April 29, 2021

by Ninisha Pradhan / April 29, 2021

Converting customers is like getting a second date.

When you’re on a date, you try to look presentable, say all the right things, hoping to swing another date by the end of the evening. You go home thinking that the date went great and that you should prepare for another glorious night out sometime next week.

But what if you haven't done enough? What if you never hear from your date?

Or even worse, what if your date came in, saw you, and then left right away? If you’ve ever had a visitor exit your website without taking any action, congratulations – you now know what it feels like when a date bails on you.

People may not always be impressed with your efforts – even when you put your best foot forward. Sometimes, they need a little nudge or a reminder, like a call or a text message, before they take an action.

With businesses becoming more customer-centric, marketers have to work harder to build a more personal relationship with their potential customers. Much like a date, customers expect to be wooed and heard. Regardless of the channels that prospective customers might interact with, their experience needs to be pleasant enough for them to take a more “favorable action”.

The question is: How do you make your brand/business more appealing to your customers?

This is where conversion rate optimization comes into the picture.

Conversion rate optimization (CRO) is a tactic that marketers adopt to improve a visitor’s overall experience on a website or ancillary channels and encourage them to take a more desirable action on the website to achieve a business goal (in this case, a conversion).

While CRO is a great practice for all types of incoming traffic, it’s especially beneficial in making the most of your existing traffic. CRO practices include, but are not limited to, page elements such as forms, buttons, or banners. From filling out a form to signing up for an email newsletter to becoming a paying customer – CRO opens up many growth opportunities.

Every CRO strategy is meant to increase the chances of a website visitor completing your business objectives. Coupled with CRO software, these strategies help marketers turn visitors into customers.

Conversion rate is calculated as:

Conversion rate (%) = (Total leads generated ÷ Total number of visitors) × 100
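As a quick illustration, the formula above can be sketched in a few lines of Python. The function name and the sample numbers are hypothetical and only show the arithmetic.

```python
def conversion_rate(conversions: int, visitors: int) -> float:
    """Return the conversion rate as a percentage of total visitors."""
    if visitors <= 0:
        raise ValueError("visitors must be a positive number")
    if conversions < 0:
        raise ValueError("conversions cannot be negative")
    return conversions / visitors * 100


# Hypothetical numbers: 50 leads generated from 2,000 visitors.
print(f"{conversion_rate(50, 2000):.1f}%")  # 2.5%
```

The same function works for micro-conversions (for example, newsletter sign-ups) as long as the numerator counts the action you care about.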

Every business has some goals it wants to accomplish vis-à-vis its customers or website visitors. The success of any digital marketing campaign is measured by its number of conversions. Converting a prospect into a paying customer is the ultimate goal, but there are also micro-conversion goals that marketing teams want to achieve. CRO checks all these boxes regardless of the magnitude of the goal.

Let’s look at some of the goals marketers have in mind when interacting with a potential customer:

All of these goals can be bucketed into two categories: Macro-conversions and micro-conversions.

As mentioned earlier, micro-conversions relate to goals that fall under the spectrum of awareness and education. These could be sign-ups for an event, webinars, email subscriptions, or even invitations that get people to visit your website for the first time. Macro-conversions focus on shifting prospective customers to paying customers.

Although converting an individual is the final goal, it’s important to remember that micro-conversions can lead to macro-conversions.

CRO takes these goals into account and provides a relevant audience to marketers. This audience has the potential to become loyal customers.

CRO works largely on this principle: Addition and subtraction where it's necessary.

This means that a CRO approach could require adding a few elements like a CTA button, a better content piece, or a testimonial blurb in a certain section. On the other hand, it could also indicate things that should be removed from your page. Anything that doesn’t contribute to a conversion goal has no place on your site. Removing elements that could hinder a visitor’s experience increases the probability of conversion while also improving your website’s user experience.

Before embarking on your CRO exercise, it’s important to ask yourself the right questions. This helps you figure out your goals and how best to achieve them using a CRO strategy.

After you’ve answered these questions, you can start building your CRO plan. While CRO plans can differ from organization to organization, they all have some steps in common.

[Figure: CRO stages]

You can’t improve your site unless you know what you’re looking for. This is why research should be the first step in any CRO plan. When you figure out the potential variables in the equation that could make or break your conversion game, you’ll know exactly what to change, enhance, and improve. To do this, however, you need to equip yourself with some data first.

Let’s go back to our date example.

Imagine you had to plan another date. This time, you’ve made an effort to learn what your date likes. A cursory glance at their social media page would tell you that they’ve frequently geotagged a forest trail, and most of their pictures are of them on a trek. Naturally, a good venue and activity for your next date with this person would be trekking on their favorite trail.

Deriving data about your visitors helps you gain insight into how they behave, what clicks for them, and what doesn’t. Several tools can fetch this data to help kickstart your CRO research. Web analytics tools like Google Analytics capture data in real time to track website traffic and the channels it originates from, and to provide insight into audience demographics.

Another great tool that marketing teams can use to derive sharp insights is a heat map. Heat maps report how online users interact with and navigate a particular webpage. Using heat maps and in-page analytics, marketers can see how visitors move their cursors, where and how often they click elements on a page, and how far a user scrolls through the site before exiting. This is an excellent way of assessing how well the current webpage draws audience attention and what changes are required to increase conversions.

While this kind of analysis helps marketers paint a quantitative picture, they still need qualitative data to get a more well-rounded view of their incoming traffic. This is where subjective forms of data come into play. Surveys, customer feedback, and service requests raised by users can shed light on the audience’s needs and what is currently missing from your website.

| Quantitative Data | Qualitative Data |
| --- | --- |
| What you normally think of when you hear “data”: numbers, percentages, ratios – points that are easy to quantify. | The other type of data, involving subjective measures that cannot be easily quantified. |
| This is objective data like bounce rate, conversion rate, click-throughs, and more. This data is pretty black and white – what you see is what you get. | While it’s not as black and white as quantitative data, it gives you real, tangible insight into how people use your pages and what’s going wrong. |
| It can be gathered through standard analytics tools. | It can be collected with exit polls, email surveys, pop-ups, phone calls with customers, user session recordings, and so on. |

With both quantitative and qualitative data, you’re looking for big holes, drop-offs, and similar gaps. Where are you succeeding? And more importantly, where are you failing? Once you start combining this data with assessments of your content, you’ll likely find countless ideas for optimizations. Now, you need to figure out which idea to pursue based on the metrics you have at hand.

Gathering both quantitative and qualitative data gives you enough material to consider before proceeding to the next step.
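To make the search for drop-offs concrete, here is a minimal sketch that compares consecutive steps of a funnel and flags the step that loses the largest share of visitors. The step names and counts are hypothetical. In practice they would come from your analytics tool.

```python
# Hypothetical funnel counts, in the order visitors move through the site.
funnel = [
    ("Landing page", 10_000),
    ("Product page", 4_000),
    ("Add to cart", 1_000),
    ("Checkout", 400),
]


def drop_offs(steps):
    """Return (from_step, to_step, percent_lost) for each consecutive pair."""
    results = []
    for (name_a, count_a), (name_b, count_b) in zip(steps, steps[1:]):
        lost = (count_a - count_b) / count_a * 100 if count_a else 0.0
        results.append((name_a, name_b, lost))
    return results


for start, end, lost in drop_offs(funnel):
    print(f"{start} -> {end}: {lost:.0f}% of visitors lost")

# The transition with the biggest relative loss is a natural place to start.
worst = max(drop_offs(funnel), key=lambda row: row[2])
print("Biggest drop-off:", worst[0], "->", worst[1])
```

Numbers like these only tell you where visitors leave. The qualitative data (surveys, session recordings) is what suggests why.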

The next step is to make a few assumptions. Your hypothesis could be something as simple as changing the position of a CTA button, or it could be something drastic, like changing the entire content of a landing page. No matter how big or small the change is, it’s always encouraged to try different things and observe how each change fares. Any improvement in the results, even if it’s minute, could show some significant impact on the quality and quantity of your marketing qualified leads (MQLs).

View your website in a different light with the data you gathered during the research phase. After closely observing your current site with a new perspective, make notes of the areas to be improved. Maybe your images and graphics aren’t clean, or the existing CTA placements don’t make sense. Whichever pain point you want to tackle, turn it into your hypothesis and build a case around it.

For example, suppose that during your research you found that users browse your website extensively and use the search bar frequently. Even with so much activity on the webpage, a sizable percentage of your visitors don’t add anything to their carts and never check out.

With this information, you could make an assumption that users may be looking for a specific item but can’t find it with your current website layout. The hypothesis you’d make in this instance would be: “Improving our categorization system could eliminate the extra effort for users while browsing our site.” This is a sound hypothesis to work with, and the next logical step is to redesign your categories tab to include more diverse segments.

It’s important to note that a hypothesis doesn’t always produce the desired result. It’s only a hypothesis, not a conclusive finding, which is why hypothesis testing becomes crucial at this stage.

By now, you have data under your belt, you understand what your current numbers mean, and you’ve built a set of hypotheses that you believe will improve them. It’s time to test these hypotheses and see if they’re validated or not. You may even encounter new hypotheses at this stage.

This is the trickiest part of the process, with the most potential for wins and losses. If you do it right, you’ll learn to improve your conversion rate. If you do it wrong, you could end up implementing an idea that’ll actually hurt your business in the long run.

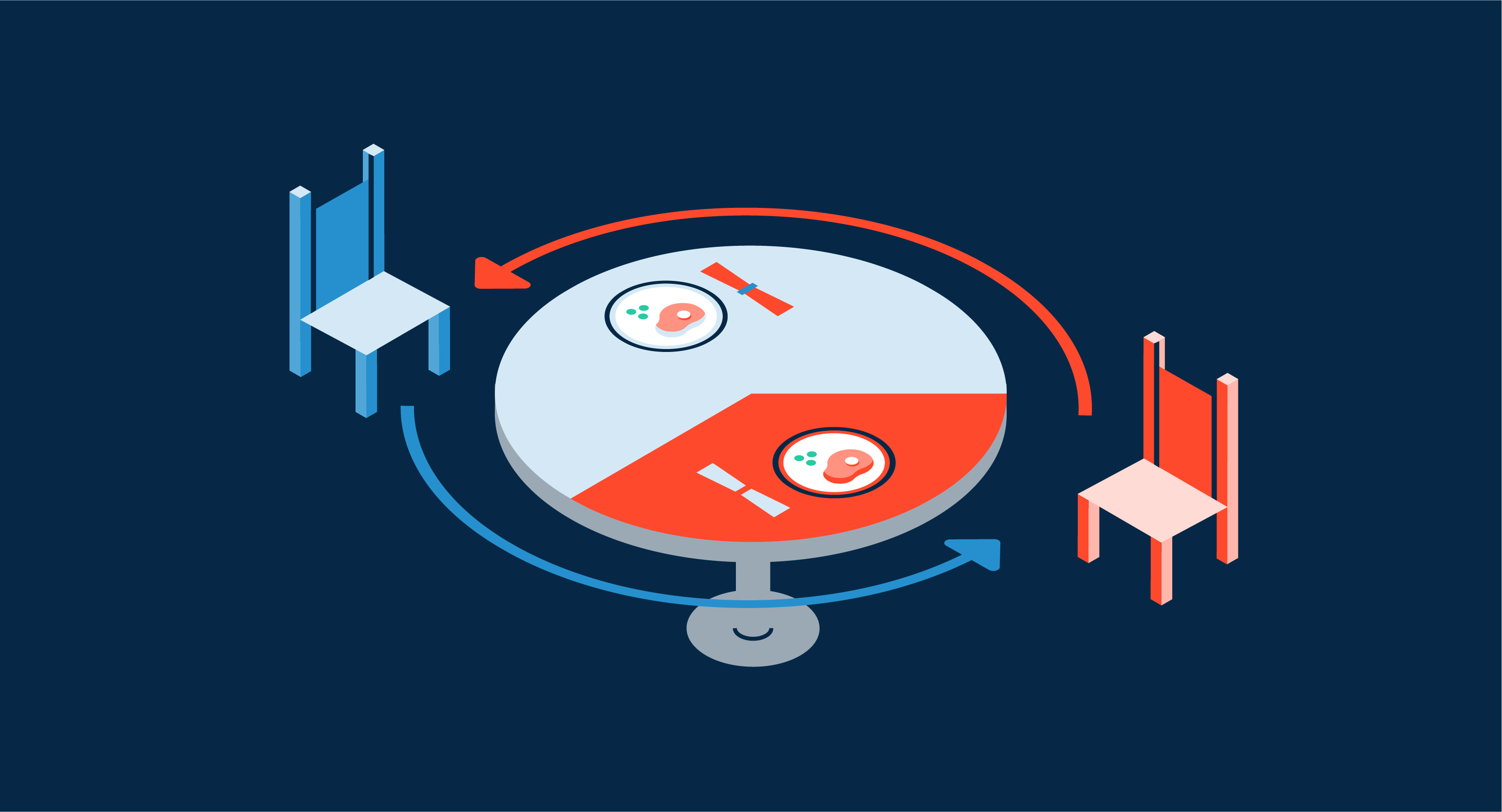

While the testing process can go many different ways, there are broadly two methods of testing hypotheses: A/B testing and multivariate testing.

A/B testing: Almost every marketer has encountered some form of A/B testing, or split testing, in their line of work. This method tests your original webpage (or the “A” page) against the page with variations (or your “B” page). In such a test, a sample group of visitors is randomly assigned to one webpage version to ensure there’s no bias based on any differentiating factor. The results of this test should indicate which page performs better for a particular goal.
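The assignment step can be sketched as follows. This is an illustration rather than how any particular testing tool works: it hashes a visitor ID instead of drawing a random number, so a returning visitor keeps seeing the same version. The function name, experiment label, and visitor IDs are hypothetical.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str = "homepage-test") -> str:
    """Deterministically assign a visitor to page "A" or page "B"."""
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # The hash behaves like a uniform random number, so even/odd
    # gives a roughly 50/50 split that ignores who the visitor is.
    return "A" if int(digest, 16) % 2 == 0 else "B"


# Hypothetical visitor IDs, used only to show that the split is balanced.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)
```

Including the experiment name in the hash means the same visitor can land in different groups for different tests, which avoids carrying one test's split over into the next.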

A/B testing is a great litmus test for any marketer, helping them set benchmarks and validate their hypothesis across different versions of their pages.

However, what can’t be learned through A/B testing is why the page improved. Was it the new layout? The new headline? The letter-style copy? Unless you conduct an A/B test on each element one at a time (headline 1 on page A vs. headline 2 on page B), you’ll never really know.

But, for most businesses, testing one element at a time is a wasteful method. At the end of the day, does it particularly matter why one page beat another? Or will you be satisfied with a page that performs significantly better regardless of the reason?
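Whether page B really "performs significantly better" is a statistical question. The article doesn't prescribe a method, so the sketch below assumes one common choice, a two-proportion z-test, and uses hypothetical visitor and conversion counts.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two_sided_p_value) for the difference in conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test when there are no (or only) conversions")
    z = (p_b - p_a) / se
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


# Hypothetical results: page A converts 200 of 10,000 visitors, page B 260 of 10,000.
z, p_value = two_proportion_z_test(200, 10_000, 260, 10_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
print("Significant at the 5% level" if p_value < 0.05 else "Not significant")
```

A small p-value says the gap is unlikely to be chance. It still says nothing about which change on the page caused it.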

Multivariate testing: This type of testing focuses on very specific elements and features within a single page, as opposed to A/B testing, which compares results across different pages.

Imagine you had to launch a new page on your website for an upcoming webinar. Your current headline is “Webinar: How to Get More Leads”. Now let’s say you wanted to experiment with a different headline that reads, “Getting More Leads in a Crowded Market - Learn From Experts”. All you want to do is test these headlines.

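Testing variations like these within a single page, typically across several elements at once (say, the headline together with the CTA text), is what multivariate testing is for. This kind of testing works really well when you want to make small tweaks and see which change maximizes your page’s potential.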

By the end of this test, not only will you understand which combination is most effective, but also the interactions between each of the elements and how they contribute to your goal. The downside to multivariate testing is that it’s not as straightforward as A/B testing. It also requires a lot more traffic to complete testing small elements in order to validate your hypothesis.
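The traffic requirement follows from simple counting: every extra element multiplies the number of page versions to test. The sketch below enumerates the combinations. The two headlines come from the example above, while the CTA texts, image options, and monthly traffic figure are hypothetical.

```python
from itertools import product

# Each element on the page and the variations to try for it.
elements = {
    "headline": [
        "Webinar: How to Get More Leads",
        "Getting More Leads in a Crowded Market - Learn From Experts",
    ],
    "cta_text": ["Register now", "Save my seat"],        # hypothetical
    "hero_image": ["speaker photo", "illustration"],     # hypothetical
}


def all_variants(options):
    """Return every combination of element variations as a list of dicts."""
    names = list(options)
    return [dict(zip(names, combo)) for combo in product(*options.values())]


variants = all_variants(elements)
print(len(variants))  # 2 * 2 * 2 = 8

# Hypothetical traffic: the same visitors are now spread across 8 versions.
monthly_visitors = 40_000
print(monthly_visitors // len(variants), "visitors per version")
```

With two versions, each would get half the traffic. With eight, each gets an eighth, which is why multivariate tests take longer to reach a reliable result.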

Establishing and validating your hypotheses is necessary, but getting CRO right takes constant attention. Just one test can take weeks or months to run. And even if it produces a positive result, it won’t move the needle as far as you’ve been led to believe.
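A rough sample-size estimate shows why a single test can run for weeks. This sketch uses the standard normal-approximation formula for comparing two proportions. The 5% significance level, 80% power, conversion rates, and daily traffic are all assumptions chosen for illustration.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline, target, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant to detect baseline -> target."""
    if baseline == target:
        raise ValueError("baseline and target rates must differ")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    variance = baseline * (1 - baseline) + target * (1 - target)
    return ceil((z_alpha + z_beta) ** 2 * variance / (target - baseline) ** 2)


# Hypothetical goal: lift the conversion rate from 2% to 2.5%.
n = visitors_per_variant(baseline=0.02, target=0.025)
daily_visitors = 500  # hypothetical traffic, split evenly between two variants
days = ceil(2 * n / daily_visitors)
print(f"{n} visitors per variant, roughly {days} days at {daily_visitors} visitors/day")
```

The smaller the lift you want to detect, the more visitors you need, so low-traffic pages are better suited to testing bold changes than subtle ones.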

To produce a marked improvement that significantly impacts the bottom line, you need more than scattershot one-and-done tests. You need a strategy.

Let’s dive deep into each of the strategies you can use.

“Buy a pair of these shoes.”

Would you be compelled to buy them? Probably not.

“Quick! Buy a pair of our limited edition sneakers! Only 2 left in stock!”

How about now? You’d probably be more inclined to buy them than in the previous example.

How often have you purchased something just because everyone around you was buying it? Don’t be shy, we’ve all done it at some point in our lives. We like being on top of trends. Feeling like you may have missed out on something is a human emotion we experience from time to time – one that we try hard to avoid.

It sounds pretty corny, but it affects how people make buying decisions. If you were to visit Amazon right now and search for flashlights, you’d find some flashlights with an “Only few in stock” message displayed below the item. What this message tells a shopper is: “This item is popular and trusted by people compared to the other brands.” If you weren’t picky about the kind of flashlight you wanted, you’d most likely go for the brand that has few in stock.

This tactic is evident with businesses hosting events or webinars. If you see an ad for a webinar on social media, you’ll scroll away from the post unless you’re really interested in attending. If you come across another ad for this webinar that reads, “Sign up now! Only 10 seats remaining!” you’ll consider attending this webinar since it seems like peers in your industry see a lot of value in it.

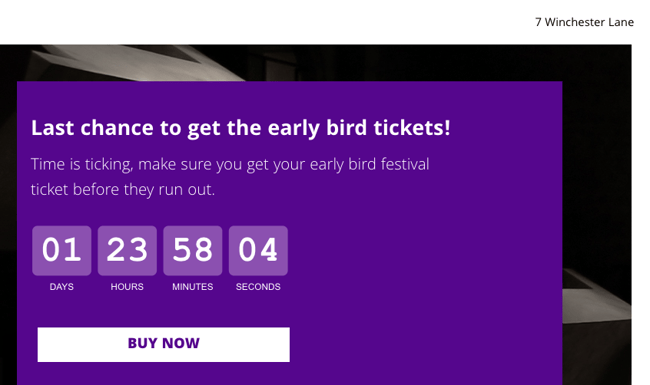

Source: GetResponse

GetResponse adds a timer that counts down the days remaining before their festival starts. It quickly alerts you that registration will close soon and prompts you to register as soon as possible. As visitors watch the seconds tick by, the timer evokes a strong sense of urgency and they find themselves inching toward the “Buy Now” button.

However, this doesn't always work. Seasoned buyers have realized that businesses use this as a gimmick and that, in reality, there may not be such high demand for an item or service. The key here is to be authentic. People are smart enough to smell a marketing ploy when they see one. Elements that create a sense of urgency should be used sparingly and honestly.

Your page is an extension of your business. This is where visitors have their final interaction with your business before moving further in the funnel. It makes perfect sense to add elements that could nudge these visitors toward conversion and turn them into leads rather than drop-offs.

The most common page element used for conversion purposes is the humble lead capture form. While it’s imperative for any marketer or business to generate leads, visitors often dread filling out the form for fear of giving away personal information and being bombarded with promotional calls and emails later. Nevertheless, this is one element that still serves its purpose and if done well, it can result in a far better conversion rate.

The CTA button is another element that’s often included but not always thought out well. The placement of a CTA button can really change the results on your webpage. Putting the CTA button at the very bottom of your page risks it being missed by visitors who don’t scroll down far enough. On the other hand, placing the CTA button in the middle of the page can be a negative experience for some users. By running A/B tests for different button placements, you can figure out what works for your page and your users.

Page elements don’t have to be limited to just CTA buttons and forms. Other elements like tasteful image placements and little blurbs or quotes enhance the page experience, add value and functionality, and propel users to take the desired action.

Source: Betty Blocks

Betty Blocks hits the nail on the head with this simple, succinct section on their website. Rather than having only a CTA button that says “Read case study,” Betty Blocks adds an image of the CEO of the company featured in the case study, the company logo, and simple copywriting to pique a visitor’s interest. This is a great example of how a CTA button, paired with other elements, can improve the probability of someone clicking through.

Imagine you work at a bank.

Let’s say you had to explain to a kid what you do at work. You start rambling about credit analysts, throw in the words “KYC” and “amortization,” and discuss credit policies and EMI schedules. By the end of your monologue, this kid would wander off, not understanding a thing.

It’s not that your content wasn’t good. Rather, you made it sound so complicated that the target audience (here, the kid) didn’t take anything away from it. Now imagine if this were a customer. That loss feels way worse.

Company websites, especially those in technical industries, often fall prey to complicated-sounding web content. As a result, prospective buyers who are only looking for a particular solution end up walking away because they can’t make head or tail of the information you have at hand. If your content fails to inform someone, it isn’t good content.

High-action pages (such as landing pages or homepages, with opportunities for micro- or macro-conversions) should have simple copywriting and messaging. No one should be carrying a dictionary while they’re shopping. Your content should be crisp, quippy, and convey the customer value proposition immediately.

Source: Mailchimp

Mailchimp uses minimal copy and hits three key points here:

Leave the heavy jargon to the textbooks and research journals. You’re here to create value for your customers.

Humans are visual creatures. A single picture can explain five lines of text in a fraction of the time it takes someone to read a paragraph. Suffice it to say that adding images is a good tactic to increase your click-through rate.

While most web pages do include images, there are a few things that businesses get wrong. It isn’t enough to add an image and call it a day. Every image that you include on your site must serve a purpose. Beyond aesthetics, does your image inform, educate, or simplify the process or product you’re trying to sell? Also, is your image clean and of high quality? Nothing turns a visitor off more than a pixelated, blurry image. This creates the impression that what you’re selling is cheap and inauthentic.

Stock images are sprinkled everywhere – from banners to pop-ups. These types of images adorn several pages and also tackle the presentation issue. The drawback to stock images is that, well, they’re stock images. We know these are posed pictures, and they can sometimes come across as fake.

A better alternative would be to create your own images and play around with vectors and your brand colors. Not only does this fit your brand, but it also gives a sense of authenticity and allows you to create relevant assets that tie a page together. This is a time-consuming exercise, but the results really make all the difference. Another good option is to use screenshots of your product in all of its glory.

Videos are even better.

Images are static and can only explain so much. Videos, on the other hand, are rich with information and can explain your business proposition in seconds. People may be averse to reading, but they can consume videos easily, and videos don’t seem as intimidating to a user. A video of your product being used in real time does a better job of convincing a visitor to make a purchase than text describing its 30 different features. You need to see it to believe it.

Source: Pega

Pega uses a video to explain how their software works and what problems it solves. Seeing snippets of the product in action paints a better picture than reading a product description. With videos, prospects can envision using the product for themselves. Since buyers have a specific problem or a set of problems that they want to resolve, watching such a video puts a lot of things into perspective.

Going back to our hypothetical date example, imagine chatting with someone online. The conversation flows well, they seem interesting enough to talk to, and it’s going great. Now, imagine you meet up with them, and they’re not as charming as they were online.

This is the kind of disconnect visitors feel when they browse your website or your mobile app. You might have a beautiful website with great site architecture, but when you hop onto the mobile version of the same site, things don’t exactly match. Today, when businesses strive to provide an omnichannel experience through their software, make sure you start with your home. Neglecting your website’s mobile experience only hurts your chances of optimizing your conversion rate.

of global website traffic has been generated from mobile devices since 2020.

Source: Statista

Omnichannel compatibility isn’t limited to mobile experience. How you place your elements on the page may vary depending on the device type and its operating system. For instance, Mac users are used to seeing the ‘close window’ button on their left-hand side. Windows users see this button on the right. This shifts the way users perceive placements ever so slightly.

Similarly, on mobile, users no longer see a home button on newer iPhone models. They’d have to swipe in different directions to go back or go to their Home screen. Samsung users, like most Android users, are accustomed to seeing a three-button navigation bar at the bottom of their phones. A CTA button centered at the bottom of the page can cause Samsung users to accidentally exit the page altogether.

From these cases, it’s clear that placement is quite important. Different people in different countries follow different cues. Since devices come out with new updates and iterations every few years, it’s a good exercise to revisit your page and check the position of every element, and evaluate if you need to make any changes.

The bad news is that our attention spans have reportedly dropped from 12 seconds to 8 seconds.

The good news is that there’s still a way to grab people’s attention – through social proof.

Social proofing is a powerful strategy that offers authenticity, credibility, and ample information about your product without using elements that evoke a sense of urgency in buyers. What others say about a product matters to potential customers. If a celebrity endorses a brand, you’d be more inclined to check it out. If customers leave glowing reviews about software, you’d feel more confident about pitching it to your management.

Peppering your page with testimonials, quotes, or reviews influences a person’s behavior and prompts them to take action. They are more inclined to go through the funnel if they feel confident about your business and its offerings. Awards and accolades aren’t everything, but “Leader in this category” certainly has a better ring to it, doesn’t it?

Source: ActiveCampaign

ActiveCampaign proudly displays their badges on their page. Seeing how many times they have secured a 'Leader' position in their respective categories instills a sense of credibility and confidence that their products and services have been vetted by others in the industry.

Most companies add a customers page on their websites, but it’s always good to have subtle reminders interspersed throughout the page. It's nice to toot your own horn once in a while.

Let’s say you lived in a neighborhood where all the houses were randomly numbered. How would you locate a house with the address, “House #23”? It’s not feasible to go to every house and check the house number until you miraculously find the place you are looking for.

This is what visitors experience when they visit a website with poor navigation. If a customer has high intent to buy, would you waste their time by making them jump through several pages before they can even request a demo? That’s counterproductive, and you’ll only drive them further away from accomplishing your business goal. This is why most websites these days are peppered with “request a demo” CTAs on every page.

Navigation goes beyond placing CTA buttons wherever applicable. How your product pages appear, what they display, and the order of every page and section matters immensely. Most conversion optimization strategies involve a user experience exercise, where every element of your page is scrutinized before the website goes live.

Performing a card-sorting exercise helps gauge a site's usability. This way, you get the opinions of the people who truly matter: your customers. If it makes sense to them, it makes sense for your business. You’ll be surprised how many people prefer a button aligned to a particular side. If a visitor doesn’t see an element on their preferred side, they assume it’s not present at all. Our brains have become accustomed to seeing one side only. This is a real thing that UX designers have to grapple with before they come out with a layout.

Many visitors stumble upon your page via search engines. Understanding what their intent is gives you an idea of how they would navigate within your website. It’s imperative that your site matches these expectations.

The bottom line is that you need to make sure your navigation is clean, intuitive, and sensible. You don’t want customers to feel like Christopher Columbus, unable to navigate the waters without a map.

While people like to believe that they’re self-sufficient, sometimes it doesn’t hurt to get a little help.

There are two kinds of website visitors: Those who know exactly what they’re looking for, and those who are in the exploration stage. Both types may have a few questions, and answering them can fast-track their journey through the funnel to becoming a customer.

The first type of visitor is very close to purchasing. Before gravitating toward the 'add to cart' button, they might want some additional information on, say, the different packages that you offer.

Let’s say you’re looking for an SEO tool. You know the end goal here is to finalize a tool and make the purchase. You check an SEO tool vendor’s pricing page and find that they have three different packages. You’re not sure about the best package for you and there’s no way to check unless you call the vendor and discuss the packages with a sales representative.

At this point you might exit the page for another vendor’s website. You just became a lost opportunity for the first vendor.

Now, let’s re-imagine this situation.

This time, the website you’re checking out has a chatbot that pops up after a minute. You use the chat window to speak with someone in real time and you manage to settle on the package you want. You decide to check out and make a purchase.

Source: HubSpot

HubSpot has a smart chatbot that answers any questions visitors may have about their product. Reinforcing your page with chatbots or live support goes a long way toward ensuring that your visitors are adequately supplied with the information they need, and are in a better position to convert.

Always be in a position to answer common queries visitors might have when they visit your website.

Nobody likes long queues or long waiting times. And nobody wants to fill out a long form. People are doing you a favor by visiting your website and sharing their details. Visitors are usually reluctant to fill out anything online. In the event someone decides to fill out a form, don’t make them regret it by asking too many things. A good rule of thumb is that if you have to scroll down to see the bottom of the form, it’s already too long.

Take a look at this form:

Source: Google Hire

*Note: This Google page is no longer available.

This could easily fall under the “too long” category. There are quite a number of fields for a visitor to fill out, and the length of the form is also intimidating. If a book looked too thick, you wouldn’t consider it a quick coffee read now, would you?

Try to reduce the number of fields in a form as much as possible. Imagine you’re applying for a gym membership and the membership form is 10 pages long. You’ll have half a mind to walk out right away.

Most e-commerce sites see shopping carts being abandoned in the middle of the checkout process. Many companies see site visitors exit without taking any specific action.

A great way to reel them back in is through retargeting. Showing relevant retargeted ads nudges people to go back and reminds them of what they left behind. Retargeting is a good online marketing strategy to help optimize your website conversions and re-engage visitors who have a high purchase intent but left without checking out.

After all, if you’re constantly reminded about something, you’d end up doing it.

Optimizing your conversion rate doesn’t promise landslide results. However, it does impact your revenue and sales cycle. CRO methods aren’t one-size-fits-all. It’s important to assess what works for your business and adopt these methods accordingly to see an increase in the number of conversions. Persistence is the name of the game here.

Achieving high rankings through SEO can increase your website traffic. Learn how you can make SEO work for your business.

Ninisha is a former Content Marketing Specialist at G2. She graduated from R.V College of Engineering, Bangalore, and holds a Bachelor's degree in Engineering. Before G2, Ninisha worked at a FinTech company as an Associate Marketing Manager, where she led Content and Social Media Marketing, and Analyst Relations. When she's not reading up on Marketing, she's busy creating music, videos, and a bunch of sweet treats.
