November 20, 2019

by Claire McGregor / November 20, 2019

Online reviews have skyrocketed in importance in recent years.

A whopping 93% of consumers say that online customer reviews impact their purchase decisions. The value of reviews for customers is very clear, but what about the value to businesses?

Much work is being done to understand the relationship between ratings, reviews, and revenue. Cornell University recently found that just responding to customer reviews, especially negative ones, has a positive impact on consumers’ views of a hotel. Harvard Business School reports that when restaurants achieve a one-star increase in their Yelp rating, they enjoy a massive 9% increase in revenue.

But how do you turn reviews and ratings into revenue?

Today’s customers want to see proof that businesses are not only listening but actively making use of the feedback they receive. Voice of the customer (VoC) programs aim to facilitate exactly that—offering businesses a framework for collecting and actioning user feedback.

Incorporating your online reviews into your voice of the customer program gives you the tools to begin understanding what customers are saying at a macro level so you can spot opportunities to improve and measure that improvement over time.

In this post, we’ll explore the steps you can take to start maximizing the value you get from your reviews using VoC techniques like sentiment analysis and natural language processing. We’ll also look at real examples of how the content of your reviews can help you refine and prioritize your product roadmap.

Many companies are already collecting online reviews on more than one platform. Software companies are reviewed on G2. Restaurants are often reviewed on Google, Tripadvisor and Yelp. Hotels might have reviews on Expedia, Booking.com, Hotels.com and Tripadvisor. Mobile app developers generally offer their apps and garner reviews on multiple platforms including Apple, Google Play, Amazon, and Microsoft. If your company manufactures physical products that are sold online, reviews could be spread across Target, Walmart, Amazon, Google Shopping and countless other platforms.

Just keeping track of and replying to reviews across these platforms is difficult enough—deriving meaning from all those individual reviews is especially challenging. The human mind simply isn’t designed to assimilate large volumes of data, and it is nearly impossible to avoid confirmation bias when humans try to analyze review text manually at scale.

This is where sentiment and text analytics, both integral parts of all best-in-class VoC programs, can help.

Sentiment analysis is an analytical technique that uses software to parse a piece of text and determine whether it is positive, neutral or negative. Sentiment analysis of the text component of your reviews helps you answer questions like:

The answers to these questions can help you determine whether the changes you are making to your product or service are well received by your customers. Mapping shifts in user sentiment to new app versions, or to other changes in your product or service, helps you understand your customers' reaction at a macro level.
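To make the idea of mapping sentiment to releases concrete, here is a minimal sketch in Python. It assumes a toy word-list classifier (the `POSITIVE` and `NEGATIVE` sets, the `classify` function, and the sample reviews are all hypothetical, invented for illustration). Commercial VoC tools rely on trained models rather than word lists, so treat this as an outline of the bookkeeping, not of how any particular product works.

```python
from collections import Counter, defaultdict

# Hypothetical word lists for illustration only; real tools use trained models.
POSITIVE = {"love", "great", "fast", "helpful", "reliable"}
NEGATIVE = {"crash", "crashes", "slow", "broken", "bug"}


def classify(text):
    """Label text as positive, negative, or neutral by counting word-list hits."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def sentiment_by_version(reviews):
    """Return {version: {label: share of that version's reviews}}."""
    counts = defaultdict(Counter)
    for version, text in reviews:
        counts[version][classify(text)] += 1
    return {
        version: {label: n / sum(c.values()) for label, n in c.items()}
        for version, c in counts.items()
    }


# Made-up sample data: (app version, review text)
reviews = [
    ("2.0", "Love the new design, great update!"),
    ("2.0", "Fast and reliable."),
    ("2.1", "App crashes on launch."),
    ("2.1", "So slow and broken since the update."),
    ("2.1", "Great radar maps."),
]

shares = sentiment_by_version(reviews)
```

Comparing `shares["2.0"]` with `shares["2.1"]` shows how the proportion of positive and negative reviews moved between releases, which is the same comparison a sentiment timeline makes visually.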

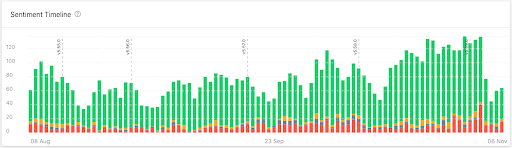

Every business hopes to see something like this sentiment timeline:

Notice that the last two version updates have not only seen a growth in total review count but an increase in the proportion of reviews that are positive.

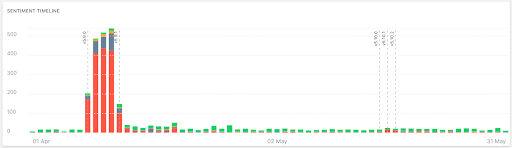

We are all aiming to avoid sentiment analysis charts that look like this data from a weather app:

Note the correlation between the dotted lines that mark the release of a product and the sharp spike in reviews with negative sentiment.

Star ratings are a useful indicator of public opinion, but they tend to present a much more positive overall picture than sentiment analysis does. Many review platforms, such as mobile app stores, offer an option for users to simply give a star rating without leaving a review. In some cases, the number of ratings on a given platform can be more than 20x that of reviews.

A recent study of the top 100 mobile apps found that overall ratings are, on average, 1.33 stars higher than the star ratings attached to reviews that include a comment. This suggests that users often write reviews to share complaints and concerns or to report bugs, all of which are important inputs for Voice of the Customer analysis.

In addition, sentiment and star rating do not always line up. A recent Appbot analysis of 6 million app reviews revealed that 7-8% of the sample was classified as having a mixed sentiment. Mixed sentiment describes reviews where the star rating is at odds with the sentiment of the review text—a high star rating with a negative comment, or vice versa. For reviews with mixed sentiment, star ratings can be misleading.
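As a rough illustration of how mixed-sentiment reviews might be flagged, the sketch below compares each star rating with a sentiment label that has already been assigned to the review text. The thresholds (4 to 5 stars as "high", 1 to 2 as "low"), the `is_mixed` helper, and the sample data are assumptions made for this example; they are not the rules Appbot used in its analysis.

```python
def is_mixed(stars, text_sentiment):
    """True when the star rating and the text sentiment point in opposite directions.

    Assumed thresholds: 4-5 stars counts as a high rating, 1-2 stars as low.
    """
    high, low = stars >= 4, stars <= 2
    return (high and text_sentiment == "negative") or (
        low and text_sentiment == "positive"
    )


# Made-up sample: (stars, sentiment label already assigned to the review text)
sample = [
    (5, "negative"),  # high rating, negative comment: mixed
    (1, "positive"),  # low rating, positive comment: mixed
    (5, "positive"),  # rating and text agree
    (3, "negative"),  # a middling rating is not treated as a conflict here
]

mixed = [review for review in sample if is_mixed(*review)]
mixed_share = len(mixed) / len(sample)
```

Reviews flagged this way are the ones where reading the text matters most, because the star rating alone would point you in the wrong direction.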

Sentiment analysis helps us to identify trends in how users really feel. It’s important to remember that current sentiment analysis techniques are still imperfect, but determining sentiment for a review is also quite subjective when performed by humans. Machine-based sentiment analysis has the advantage of behaving the same way consistently, making it a must-have tool when analyzing reviews as part of VoC.

After using sentiment analysis to get a bird's-eye view of how users feel about your company, the next step is to investigate why they feel that way.

Natural language processing (NLP) can help you do just that. NLP is a field of AI concerned with training machines to understand human languages. It encompasses many different techniques that could help you analyze reviews.

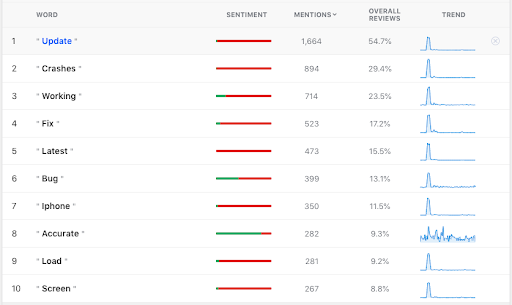

As an example, let’s revisit the weather app data we looked at above. When we use NLP to perform a keyword analysis over a defined period of time, these are the most popular words we see:

This technique sounds simple, almost simple enough to run in a spreadsheet, but there are some NLP techniques running under the hood here to make this analysis useful without much manual intervention.

These techniques include:

We can see above that the majority of the popular words for this app during the 90-day reporting period relate to crashes and bugs. Mentions of these words are concentrated in the early part of the period, around the time a new version of the app was launched (the same period in which we saw a spike in negative sentiment in the graph above). If we focus on that period, the numbers shift somewhat:

Item 1 above shows that 83.5% of reviews that mention the word “Update” have overall negative sentiment. We can also see in item 2 that almost 78% of all reviews in this period of seven days mention “Update” and almost 43% mention “Crashes.”
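The two figures described above (the share of reviews that mention a word, and the share of those mentions that are negative) can be computed along these lines. This is a sketch under stated assumptions: the `keyword_stats` function and the sample reviews are hypothetical, sentiment labels are taken as given, and matching is a case-insensitive whole-word match, so variants such as "updated" would need stemming or a similar normalization step to be counted.

```python
import re


def keyword_stats(reviews, keyword):
    """Return (mention_share, negative_share_among_mentions) for a keyword.

    reviews is a list of (text, sentiment_label) pairs.
    """
    pattern = re.compile(r"\b" + re.escape(keyword) + r"\b", re.IGNORECASE)
    mentions = [(text, label) for text, label in reviews if pattern.search(text)]
    if not mentions:
        return 0.0, 0.0
    mention_share = len(mentions) / len(reviews)
    negative_share = sum(label == "negative" for _, label in mentions) / len(mentions)
    return mention_share, negative_share


# Made-up sample data: (review text, sentiment label)
reviews = [
    ("The update broke everything", "negative"),
    ("Crashes after the update", "negative"),
    ("Love this update", "positive"),
    ("Radar is accurate", "positive"),
]

update_mentions, update_negative = keyword_stats(reviews, "Update")
crash_mentions, crash_negative = keyword_stats(reviews, "Crashes")
```

Running the same function over a narrower date range is what produces the kind of shift described above, where a keyword that looks minor across 90 days dominates the week after a release.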

This information can be really useful in advocating for prioritization of the investigation and repair of these issues, especially in situations in which your roadmap is jam-packed with other things the technical team is meant to be working on.

There are several other popular natural language processing techniques for VoC reporting. Some tools offer pre-fabricated topic or theme detection, grouping reviews based on the topics or themes that are common in your data set. Many tools offer the option to create your own themes or topics so you can be more specific in monitoring content themes that are important to your own product, service, or brand.
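A custom theme can be as simple as a named group of keywords. The sketch below assumes that approach; the `THEMES` mapping and the `tag_themes` function are hypothetical, and commercial tools may detect themes with more sophisticated models than keyword matching.

```python
import re

# Hypothetical custom themes: theme name -> keywords that signal it.
THEMES = {
    "stability": {"crash", "crashes", "freeze", "bug"},
    "pricing": {"price", "subscription", "expensive"},
}


def tag_themes(text, themes=THEMES):
    """Return the sorted names of all themes whose keywords appear in the text."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    return sorted(name for name, keywords in themes.items() if words & keywords)


tags = tag_themes("Crashes constantly and the subscription is expensive")
```

Because a review can match more than one theme, the function returns a list, which lets you count how often each theme appears over time.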

There are many other types of user feedback you can use in your analysis, and different reporting techniques we could explore, but the recommendations above are a solid starting point for getting a better understanding of VoC by leveraging reviews. This practice, along with other enterprise feedback management efforts, can help you prioritize work that matters to users and reap the benefits of higher star ratings. Your business will perform better and your customers will thank you.

Claire McGregor is co-founder and CMO at Appbot, the industry-standard tool that helps companies like Twitter, BMW, and Microsoft monitor and analyze app reviews and Amazon reviews. Appbot also offers Voice of the Customer tools to analyze data from popular helpdesk and survey tools, making it easy for app developers to understand all parts of the customer journey. Prior to Appbot, Claire was Head of Marketing at Agworld, an Australian agritech company.
